Deepfakes: Identity Threats Bypassing Legacy Security Stacks

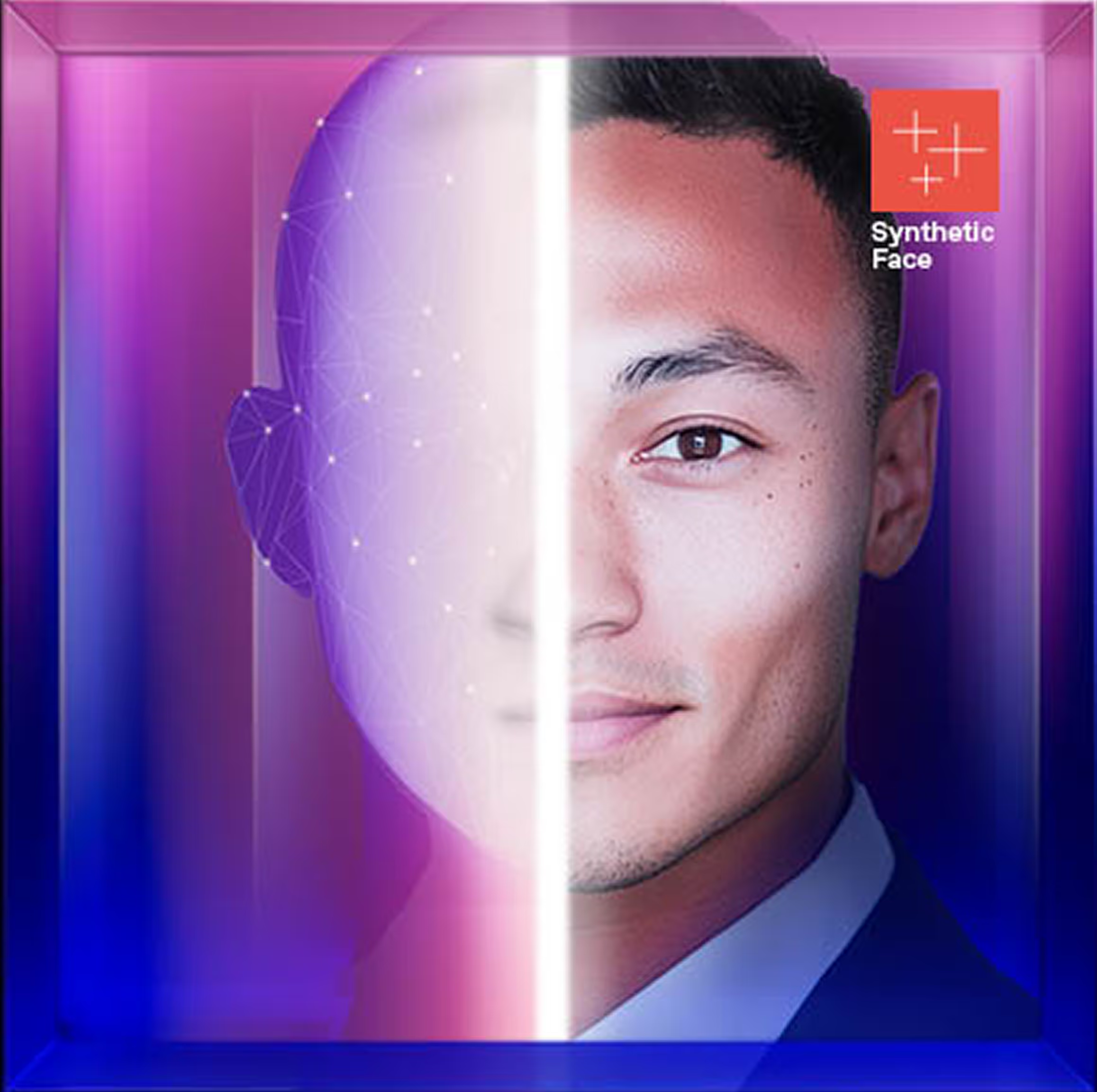

Attackers use deepfakes to manipulate help desk personnel, impersonate leadership on live calls, and bypass MFA through social engineering at the audiovisual layer.

Deepfake Security Incidents Are Causing Material Losses

Source: Industry Analysis

Source: Industry Report

Source: FBI Advisory

What CISOs Need to Know

Identity-based attacks are bypassing your infrastructure defenses. AI-generated voice and video are being weaponized to impersonate employees and trusted partners during live interactions.

These attacks exploit the identity people take for granted in a conversation, enabling unauthorized access and fraudulent approvals in places where your security stack has no visibility.

When authentication happens through human judgment rather than technical validation, your security perimeter moves outside the reach of your controls.

What Prepared Security Organizations Are Doing

- Implement out-of-band verification for credential resets, access requests, and financial approvals

- Require documented evidence of identity verification for critical access decisions

- Move beyond “spot the fake” training to verification behaviors and procedural discipline

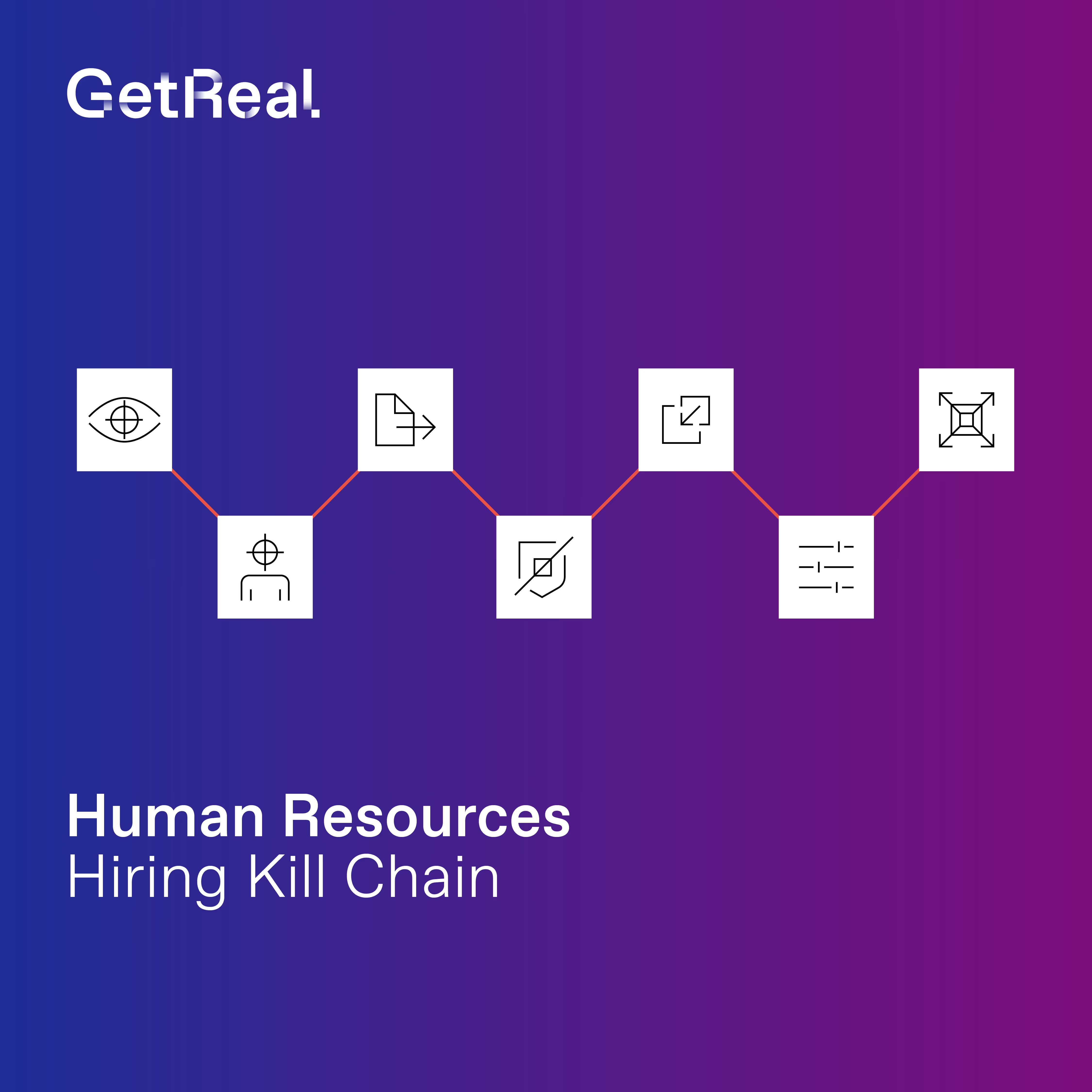

- Close gaps between HR, IT, and Finance where deepfake attacks exploit disconnected processes

- Establish playbooks for suspected deepfake incidents with immediate containment protocols
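For teams that want to see what the first two practices might look like in tooling, here is a minimal Python sketch, not a reference implementation. It assumes a hypothetical pre-registered contact directory, a hypothetical `deliver` hook for sending a one-time code, and illustrative action names. The class and field names are invented for this example.

```python
import hmac
import secrets
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# Assumption: these action names are illustrative labels, not a standard taxonomy.
HIGH_RISK_ACTIONS = {"credential_reset", "access_request", "financial_approval"}


@dataclass
class VerificationRecord:
    """Documented evidence of one verification attempt."""
    requester: str
    action: str
    channel: str
    verified: bool
    timestamp: str


@dataclass
class OutOfBandVerifier:
    # Hypothetical directory of contact channels registered before any request.
    directory: dict[str, str]
    # Hypothetical delivery hook (SMS, callback, authenticator push).
    deliver: Callable[[str, str], None]
    audit_log: list[VerificationRecord] = field(default_factory=list)
    pending: dict[tuple[str, str], str] = field(default_factory=dict)

    def start(self, requester: str, action: str) -> None:
        if action not in HIGH_RISK_ACTIONS:
            raise ValueError(f"{action!r} is not a high-risk action")
        # Use only the pre-registered channel, never one offered on the call.
        channel = self.directory[requester]
        code = f"{secrets.randbelow(10**6):06d}"
        self.pending[(requester, action)] = code
        self.deliver(channel, code)

    def confirm(self, requester: str, action: str, supplied: str) -> bool:
        # Each code is single-use: it is discarded whether or not it matches.
        expected = self.pending.pop((requester, action), None)
        verified = expected is not None and hmac.compare_digest(
            expected.encode(), supplied.encode()
        )
        # Record every attempt, successful or not, as evidence for later review.
        self.audit_log.append(VerificationRecord(
            requester, action, self.directory.get(requester, "unknown"),
            verified, datetime.now(timezone.utc).isoformat(),
        ))
        return verified
```

The design choice worth noting is that the decision never depends on how convincing the caller looks or sounds: the help desk agent calls `start`, the code travels over a channel the requester registered in advance, and `confirm` leaves an audit record either way.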

Treat deepfake-enabled identity attacks as a standing security risk. Invest in solutions that provide verification beyond what users see and hear.

Download our detailed guide to learn more.