Deepfakes and Imposter Candidates: HR’s New Challenges Protecting the Enterprise Front Door

Remote hiring and AI-driven impersonation have converged into an operational threat that is already occurring at enterprise scale.

Imposter Hiring Is Already Happening at Scale

Sources for the statistics in this section: CrowdStrike 2025; analyst projection.

What CHROs and TA Leaders Need to Know

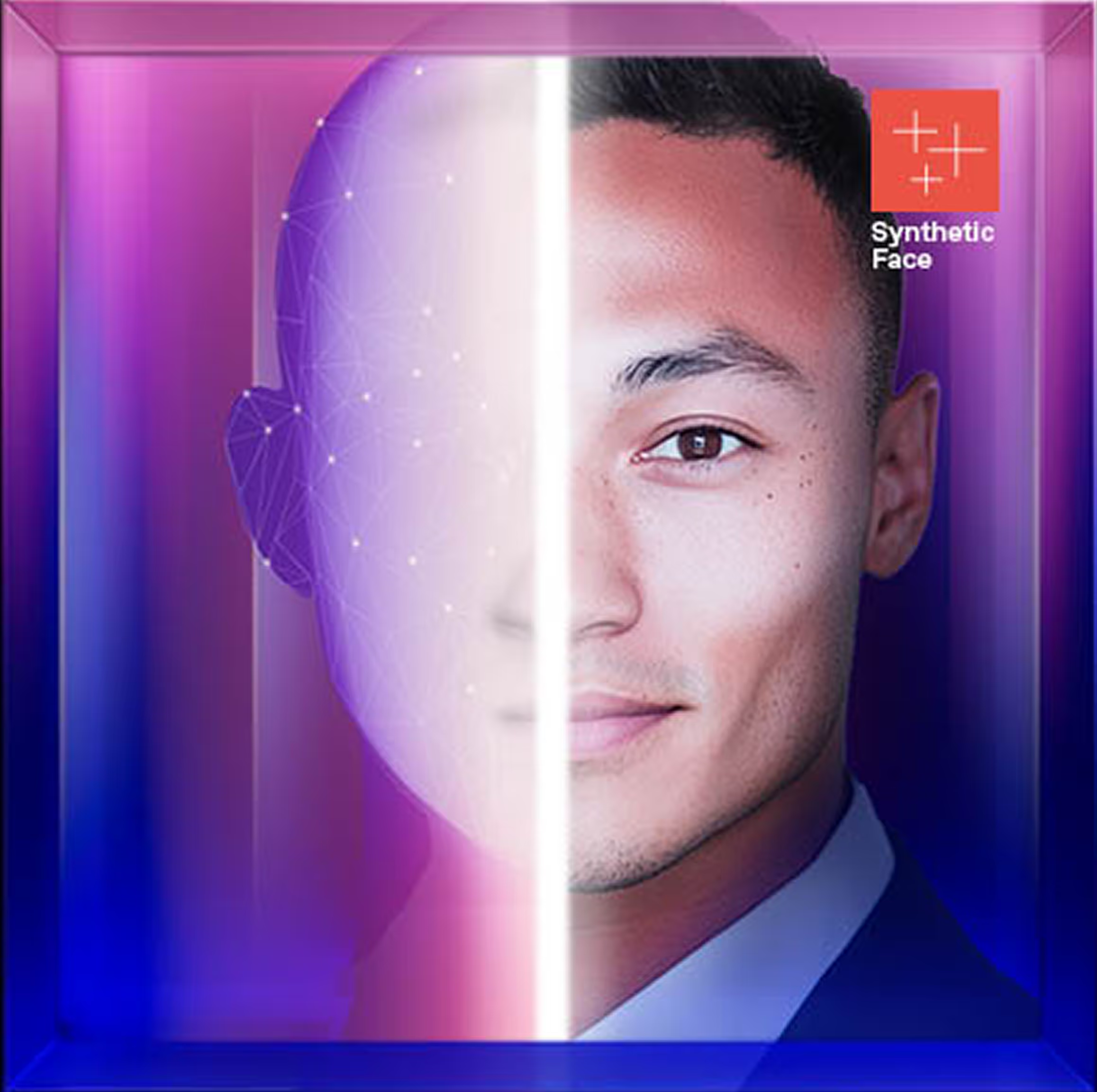

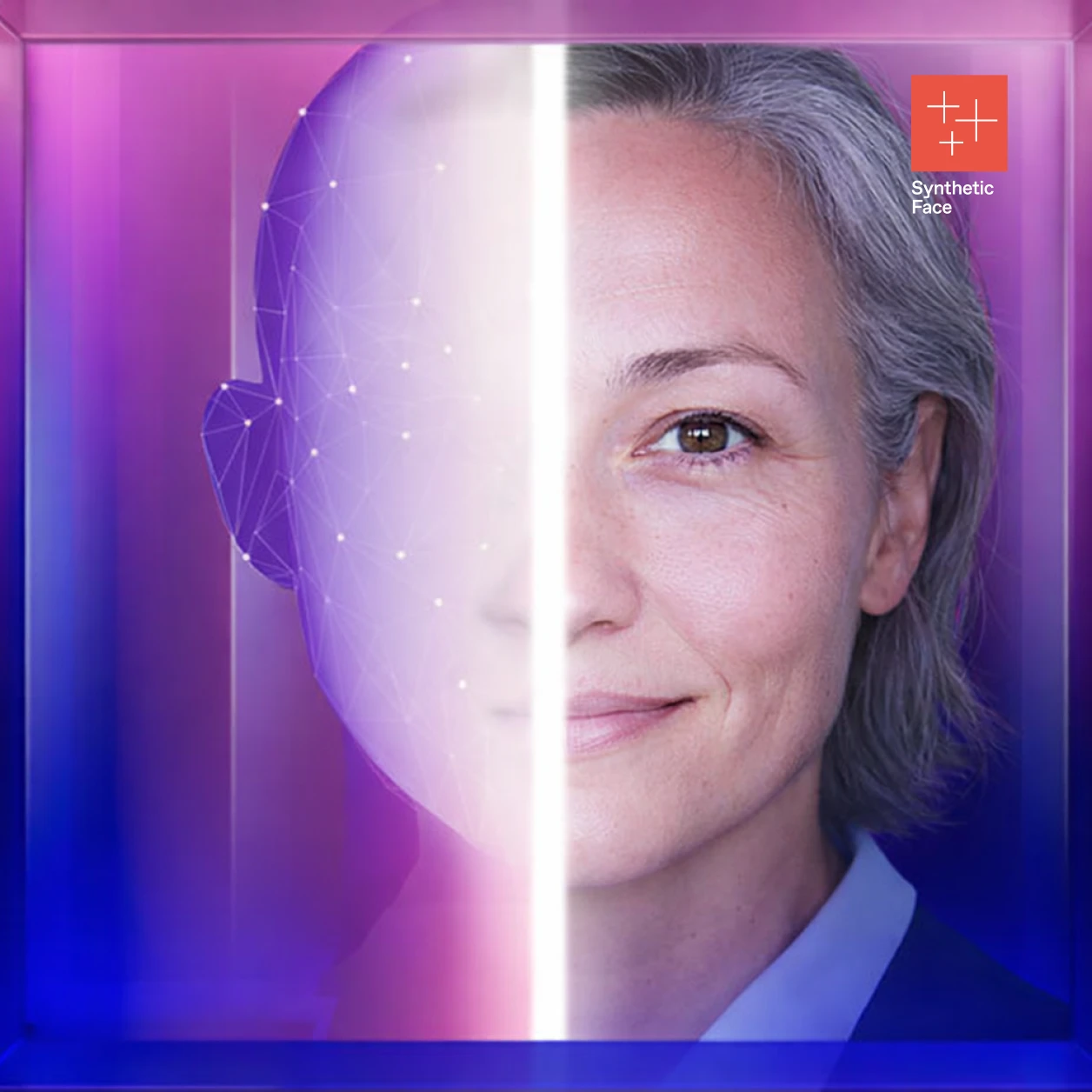

Your workforce may already include people hired under fake identities. Imposter candidates are actively targeting remote hiring workflows using synthetic identities and real-time deepfake video and audio.

AI has made fake personas, assembled from photos, resumes, video presence, and spoken responses, dramatically more convincing and easier to produce and refine at scale.

When hiring workflows treat video interviews as identity verification, imposters gain access with minimal friction. Accountability for these failures increasingly falls on the HR function.

What Prepared HR Organizations Are Doing

- Implement continuous identity verification that goes beyond video interviews to include biometric checks

- Train recruiters and hiring managers to recognize deepfake red flags in interviews

- Require third-party recruiters to implement identity verification standards and audit trails

- Coordinate with IT and Security to monitor onboarding anomalies post-hire

- Expand background checks to include direct validation of employment history with former employers

Treat identity verification as a critical control in hiring and onboarding, not an assumed outcome of video interviews.
