Synthetic Identity Fraud & Deepfakes: Lessons from the 2026 Deepfake Summit

"You already have a large number of synthetic identities enrolled at your institutions today." That is one of the observations that really stuck with me from this week's Deepfake Summit in Houston, Texas, which GetReal Security was proud to sponsor. The Summit brought together deepfake researchers and vendors with finance industry professionals who fight fraud at consumer banking, lending, and payment institutions.

How Do Deepfakes Defeat eKYC Checks?

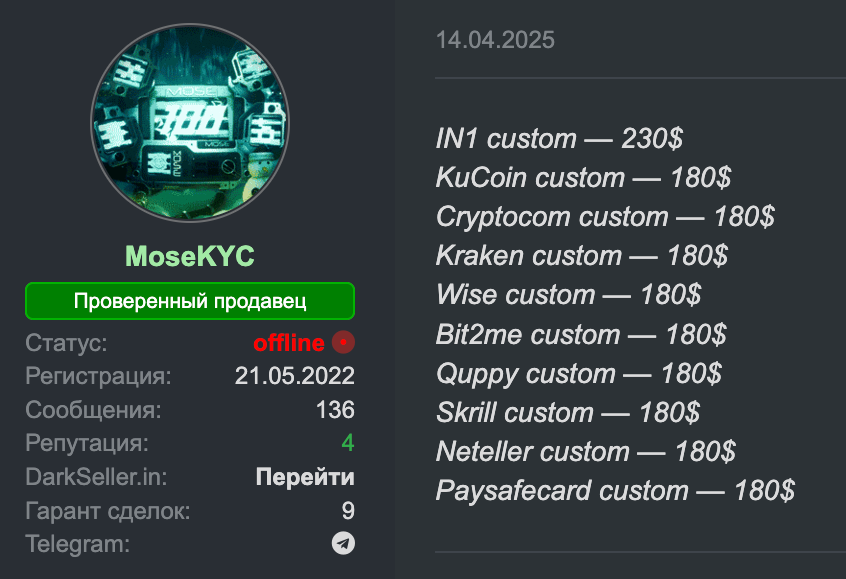

Over the past few years there has been exponential growth in the malicious use of deepfakes to fool the Electronic Know Your Customer (eKYC) checks that online banks and cryptocurrency exchanges use to verify customer identities at enrollment. These checks work by asking users to present a government-issued ID and a selfie, and AI can generate both convincingly. I've seen "KYC validated" bank accounts for sale on dark web forums for $150-$200 each. The buyers are likely involved in money laundering, but attendees at the Deepfake Summit described longer-term, more meticulous fraud operations.

Data breaches allow fraudsters to obtain a full profile of an individual, including their personal and financial information, which they then pair with an AI-generated persona and identity documents. These synthetic personas apply for loans and pay them off, working for years to build up credit in preparation for the big score.

How Do Synthetic Identities Build Credit Before Striking?

Panelists at the Summit noted that fintech startups are measured by account volume, so they don't necessarily have a financial incentive to carefully scrutinize paying customers who may be synthetic. After building up a strong credit rating, these synthetic identities move up the chain to larger institutions that can afford to make bigger loans. That’s when the payments stop coming.

The audience seemed to broadly agree that "one and done eKYC is not sufficient" and that more continuous means of monitoring and verifying identities are needed. This is true in consumer banking, but we also think it is true for workplace identity verification, where the same sorts of technologies are increasingly showing up.

How prepared is your organization for deepfake identity attacks? GetReal’s Deepfake Readiness Report reveals how exposed enterprises really are and what leading organizations do differently. Download the report →

How Can Digital Government IDs Prevent Synthetic Identity Fraud?

Attendees were very interested in the adoption of digital IDs. In some countries, the government issues cryptographically verifiable credentials to individuals, who have to show up in person to obtain them. These are viewed as highly reliable proofs of identity, but US financial institutions have no choice but to do business with people who don't have them.

The TSA's website lists 21 states that offer these IDs. My state does, but I don't have one. Do you?

For years, people have imagined a future in which reliable, internet-compatible human identification is ubiquitous. It is interesting to think about the obstacles to and drivers of adoption of these government digital IDs in the United States, and to try to imagine where this is all going.

State laws might motivate adoption. Governments are increasingly requiring social networks and even operating systems to verify the ages of their users. Notwithstanding the questions being raised about the wisdom of these requirements, complying with an Internet age verification request might be easier if your government identification is loaded onto your mobile device.

Another driver might be AI agents. Several competing protocols have emerged for users to provide cryptographic authorization to agents acting on their behalf. Payments are an obvious first use case, but there will be lots of others, and verifying that a particular agent is authorized by a real human being may require a government-issued digital credential in the future.

One panelist complained that she couldn't easily enable her chatbot to access paywalled resources on the Internet on her behalf. Of course, giving all your passwords to a chatbot is going to end poorly. There need to be standards that allow agents to access secure resources without carrying a credential they could be talked into sharing. There will also have to be a protocol for out-of-band verification with the end user of what the agent is doing: a substitute for MFA that conveys both the access request and what the access is needed for.
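To make that idea concrete, here is a minimal sketch of one way such a flow could look. It is not any of the emerging agent protocols, and every name in it (`AccessRequest`, `approval_prompt`, `issue_grant`, `check_grant`, `SERVER_KEY`) is hypothetical. It assumes the resource server holds a secret key, shows the user both the resource and the purpose on a separate channel, and only then issues a short-lived grant tied to that one resource. An HMAC stands in for whatever signature scheme a real standard would specify.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from typing import Optional

# Hypothetical demo key: held by the resource server, never by the agent.
SERVER_KEY = b"demo-only-secret"


@dataclass(frozen=True)
class AccessRequest:
    agent_id: str      # which agent is asking
    resource: str      # the single resource it wants
    purpose: str       # why it wants it, shown to the user
    expires_at: float  # Unix time after which the grant is useless


def approval_prompt(req: AccessRequest) -> str:
    """Text shown to the user on a separate channel, not through the agent."""
    return f"Agent {req.agent_id} wants {req.resource} in order to: {req.purpose}"


def issue_grant(req: AccessRequest, user_approved: bool) -> Optional[str]:
    """Return a grant bound to this exact request, or None if the user declined."""
    if not user_approved:
        return None
    payload = json.dumps(asdict(req), sort_keys=True).encode()
    tag = hmac.new(SERVER_KEY, payload, hashlib.sha256).hexdigest()
    return payload.hex() + "." + tag


def check_grant(grant: str, resource: str, now: float) -> bool:
    """Accept only an untampered, unexpired grant for this specific resource."""
    try:
        payload_hex, tag = grant.split(".")
        payload = bytes.fromhex(payload_hex)
    except ValueError:
        return False
    expected = hmac.new(SERVER_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(tag.encode(), expected.encode()):
        return False
    claims = json.loads(payload)
    return claims["resource"] == resource and now < claims["expires_at"]
```

In this sketch the agent never sees a password. If it is talked into leaking the grant, the leak exposes one resource for a limited time rather than an account. The grant is still a bearer token, though, so a real design would also need to bind it to the agent itself.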

I can see lots of ways to get this wrong that attackers could exploit, and I think we will be seeing deployed systems that get it wrong in a variety of ways for a long time to come. Technological advancement often involves learning about security requirements the hard way, and there will be a lot of work to do to identify and shore up security vulnerabilities in these infrastructures as they mature.

Deepfakes and synthetic identities don’t stop at eKYC. They’re already targeting your recruiters, IT help desk, and finance team. GetReal helps enterprises detect and stop these attacks before they do damage: Request a demo →
